In 2017, I set out to create the **fastest** CSV viewer known to the Internet. I searched for alternatives to Excel to make sure the my problem wasn't already solved. I found some that were IDE specific, for IDE's that I don't use. I found some that were Electron based, but Electron's startup time was slow back then. And there were some open source options, but they didn't scratch the itch. Many of the tools still required me to know the structure of the file before opening it.

## My Deepest Needs

- **Speed!** I need to view the data before it finishes loading it.

- **Good Guessing**. I don't want to pick the column separator,line delimiter, or quoting before preview.

- **Fault tolerance**. Let me see the data even if the guessed format is bad so I can be more informed for the next attempt.

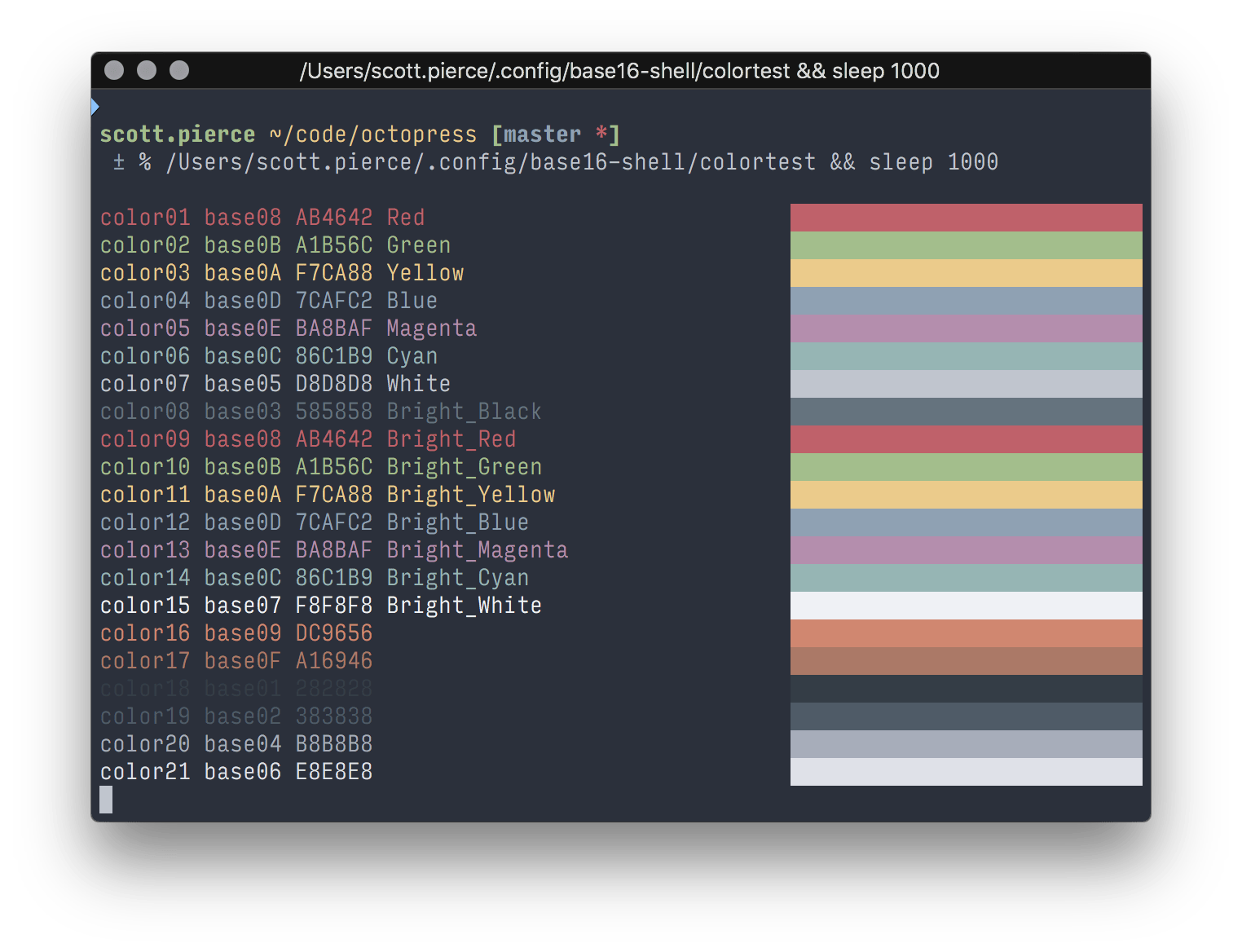

## Command Line Junky

Did you know CSVs can be read from command line? This is the way I lived before Golumn. If you're in a POSIX (Linux, Mac, Unix) environment, you can try:

```sh

head -100 /path/to/file.csv | column -ts, | less -S

```

**Command Breakdown**:

* `head -100 /path/to/file` get the first 100 lines of a file

* `column -ts,` print in table `-t` format separated by comma `-s,`

* `less -NS` paginate the result with line numbers `-N` and horizontal scrolling `-S`

The command line way, checks all 3 needs. It's fast, it let's me see results, and it's fault tolerant. It _may_ look like crap if the delimiter is wrong, but that's easy to remediate with different flags, and because I can see something, it allows for quick adjustments. For instance, `column -t-s\|` would split on pipe instead of comma.

This is nice and all, but every once in a while, a graphical interface is needed to get a better feel for the data. GUIs provide for smooth scrolling and higher resolution than the terminal. It's also nice to have numbers aligned properly and clickable things.

## Golumn is Born

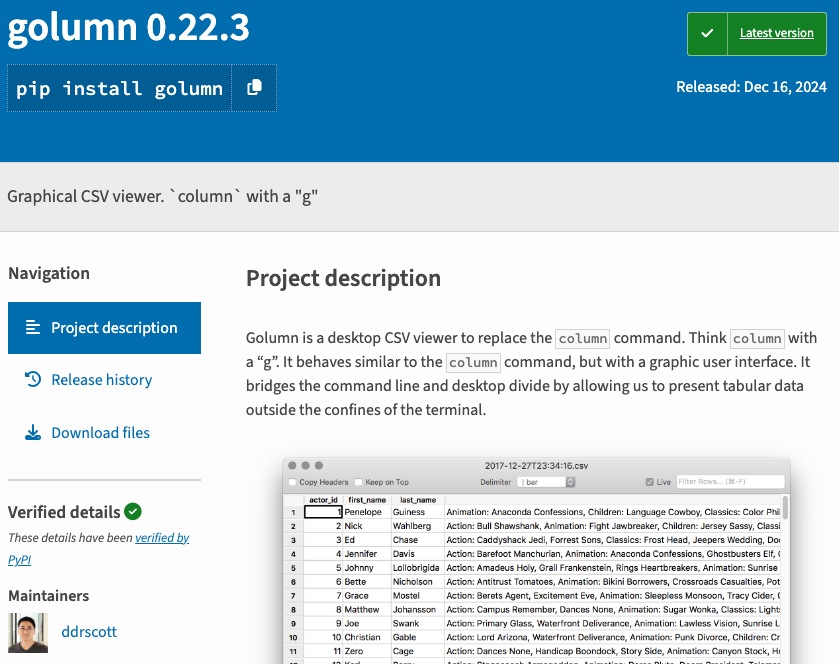

Golumn is a Python project built with wxPython built on wxWidgets. It leverages Python's CSV sniffer and SQLite for data processing.

https://pypi.org/project/golumn/

In 2017, I set out to create the **fastest** CSV viewer known to the Internet. I searched for alternatives to Excel to make sure the my problem wasn't already solved. I found some that were IDE specific, for IDE's that I don't use. I found some that were Electron based, but Electron's startup time was slow back then. And there were some open source options, but they didn't scratch the itch. Many of the tools still required me to know the structure of the file before opening it.

## My Deepest Needs

- **Speed!** I need to view the data before it finishes loading it.

- **Good Guessing**. I don't want to pick the column separator,line delimiter, or quoting before preview.

- **Fault tolerance**. Let me see the data even if the guessed format is bad so I can be more informed for the next attempt.

## Command Line Junky

Did you know CSVs can be read from command line? This is the way I lived before Golumn. If you're in a POSIX (Linux, Mac, Unix) environment, you can try:

```sh

head -100 /path/to/file.csv | column -ts, | less -S

```

**Command Breakdown**:

* `head -100 /path/to/file` get the first 100 lines of a file

* `column -ts,` print in table `-t` format separated by comma `-s,`

* `less -NS` paginate the result with line numbers `-N` and horizontal scrolling `-S`

The command line way, checks all 3 needs. It's fast, it let's me see results, and it's fault tolerant. It _may_ look like crap if the delimiter is wrong, but that's easy to remediate with different flags, and because I can see something, it allows for quick adjustments. For instance, `column -t-s\|` would split on pipe instead of comma.

This is nice and all, but every once in a while, a graphical interface is needed to get a better feel for the data. GUIs provide for smooth scrolling and higher resolution than the terminal. It's also nice to have numbers aligned properly and clickable things.

## Golumn is Born

Golumn is a Python project built with wxPython built on wxWidgets. It leverages Python's CSV sniffer and SQLite for data processing.

https://pypi.org/project/golumn/

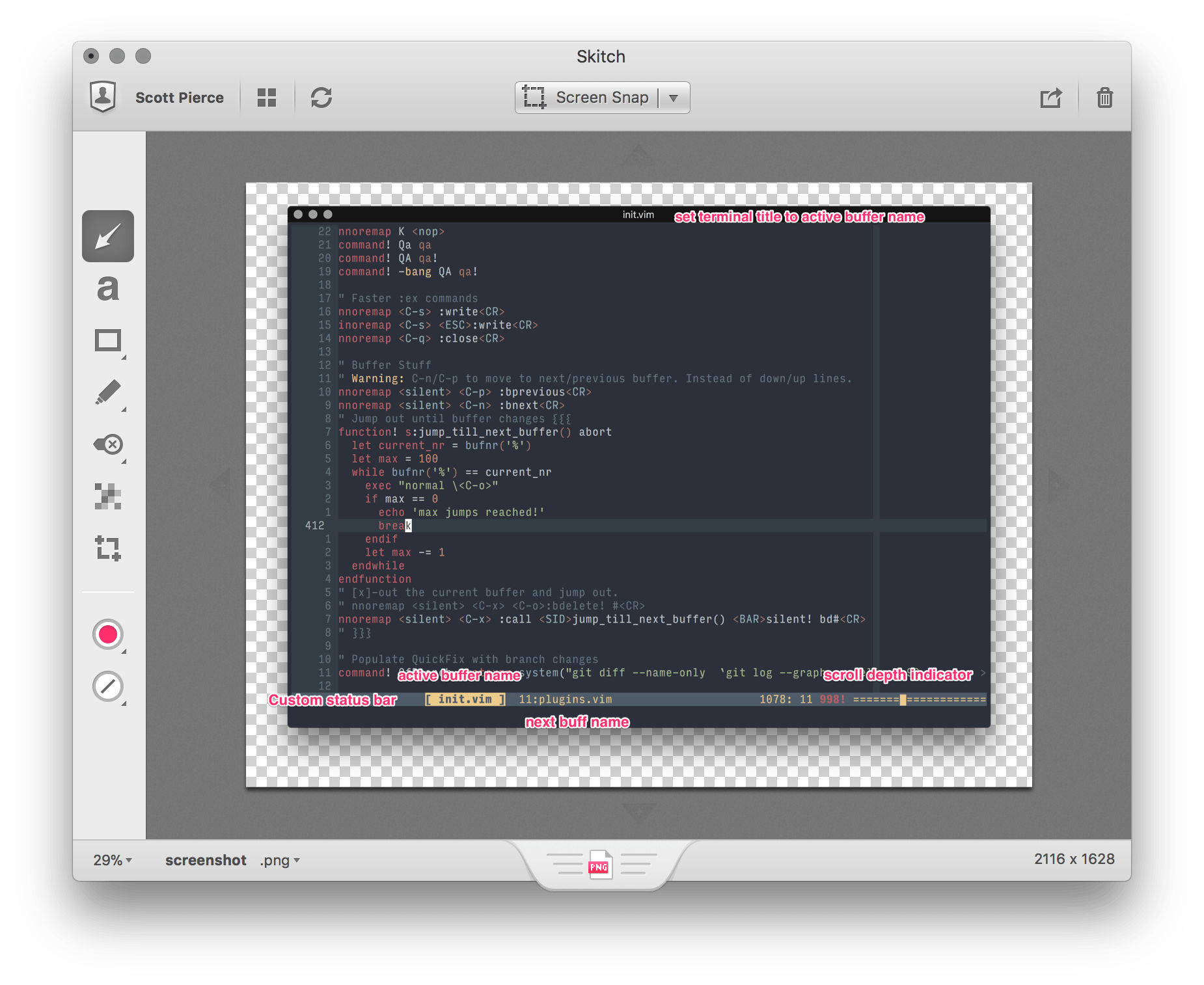

The command, `golumn /path/to/file.csv`, will open a GUI Window showing data in 0.1 seconds with a simple grid of data scrollable till my fingers get tired.

Overtime, I've added keyboard shortcuts to quick filter/unfilter data based on the current selection, sort columns, and search and filter any column for a piece of text, but at it's core, it's a sharp tool that does 1 thing well: **View CSV files**

## Open Source and Legacy

[Golumn](https://github.com/ddrscott/golumn) is open source. One day, someone other than myself can contribute to it. Someone other than myself could have the same impatience for CSV load times. Someone could have similar hotkey preferences. Or maybe not. I don't put it out there for fame or fortune. I but it out there because it's my favorite pet project and everyone should have a change to play with it. `Golumn` is buddy that has served me well for 7+ years, and I want it to have a home beyond me. History is made by sharing, not hoarding.

## Conclusion

Thanks for getting this far. I hope you find Golumn useful, and reach out to me if you have any questions or

suggestions. I'm always looking for ways to make it better.

---

# Bowling Scores the SQL Way

Date: 2024-10-09

URL: https://ddrscott.github.io/blog/2024/sql-bowling/

Tags: sql

Description: How to calculate bowling scores using SQL. No Store Procedures allowed!

The scoring rules for American Bowling are simple on paper, but can be a chore to calculate by hand. This is a fun exercise to calculate the scores in mass using SQL. Because if you can't do it in SQL, you're life is too easy!

If you don't know the rules, here's a quick summary: https://www.kidslearntobowl.com/how-to-keep-score/

## Data Preparation

Making a table to store the raw data for the individual games.

We're not going into full data modelling by adding players and timestamps. We're keeping it simple for this example.

```sql

DROP TABLE IF EXISTS game_rolls;

CREATE TABLE game_rolls (

game int,

frame int,

roll int,

pins int

);

```

### Add a Perfect Game

A perfect game is 12 strikes in a row. 10 strikes in the first 9 frames and 2 strikes in the 10th frame.

```sql

-- Perfect Game

-- Total: 300

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(1, 1, 1, 10),

(1, 2, 1, 10),

(1, 3, 1, 10),

(1, 4, 1, 10),

(1, 5, 1, 10),

(1, 6, 1, 10),

(1, 7, 1, 10),

(1, 8, 1, 10),

(1, 9, 1, 10),

(1, 10, 1, 10),

(1, 10, 2, 10),

(1, 10, 3, 10);

```

### Add an Unlucky Second Game

We managed to knock down 1 pin each frame and missed the second roll. Repeat this for all 10 frames.

```sql

-- 1 pin each frame.

-- Total: 10

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(2, 1, 1, 1),

(2, 1, 2, 0),

(2, 2, 1, 1),

(2, 2, 2, 0),

(2, 3, 1, 1),

(2, 3, 2, 0),

(2, 4, 1, 1),

(2, 4, 2, 0),

(2, 5, 1, 1),

(2, 5, 2, 0),

(2, 6, 1, 1),

(2, 6, 2, 0),

(2, 7, 1, 1),

(2, 7, 2, 0),

(2, 8, 1, 1),

(2, 8, 2, 0),

(2, 9, 1, 1),

(2, 9, 2, 0),

(2, 10, 1, 1),

(2, 10, 2, 0);

```

### Add Gutter Ball and Spare

Now, we're showing off our skills. Throw the first ball into the gutter on purpose and take down the rest in the second roll for a spare.

```sql

-- 0 then 10 each frame

-- Total: 100

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(3, 1, 1, 0),

(3, 1, 2, 10),

(3, 2, 1, 0),

(3, 2, 2, 10),

(3, 3, 1, 0),

(3, 3, 2, 10),

(3, 4, 1, 0),

(3, 4, 2, 10),

(3, 5, 1, 0),

(3, 5, 2, 10),

(3, 6, 1, 0),

(3, 6, 2, 10),

(3, 7, 1, 0),

(3, 7, 2, 10),

(3, 8, 1, 0),

(3, 8, 2, 10),

(3, 9, 1, 0),

(3, 9, 2, 10),

(3, 10, 1, 0),

(3, 10, 2, 10);

```

### Add Realistic Game with Strikes and Spares

This is my typical night out, never quite getting halfway to perfection (300). If I spent more time bowling and less time writing SQL maybe I'd get better!

```sql

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(4, 1, 1, 7), -- First frame: 7 pins

(4, 1, 2, 2), -- Spare with 2 pins => = 9

(4, 2, 1, 10), -- Second frame: Strike => 9+10+5+4=28

(4, 3, 1, 5), -- Third frame: 5 pins

(4, 3, 2, 4), -- Open frame with 4 pins => 28+9=37

(4, 4, 1, 8), -- Fourth frame: 8 pins

(4, 4, 2, 1), -- Open frame with 1 pin => 37+9=46

(4, 5, 1, 10), -- Fifth frame: Strike => 46+10+6+3=65

(4, 6, 1, 6), -- Sixth frame: 6 pins

(4, 6, 2, 3), -- Open frame with 3 pins => 65+9=74

(4, 7, 1, 9), -- Seventh frame: 9 pins

(4, 7, 2, 0), -- Open frame with 0 pins => 74+9=83

(4, 8, 1, 10), -- Eighth frame: Strike => 83+10+9=102

(4, 9, 1, 7), -- Ninth frame: 7 pins

(4, 9, 2, 2), -- Open frame with 2 pins => 102+9=111

(4, 10, 1, 10), -- Tenth frame: Strike

(4, 10, 2, 10), -- Bonus roll: Strike

(4, 10, 3, 8); -- Bonus roll: 8 pins => 111+10+10+8=139

```

## Query to Calculate Scores

There are many ways to calculate the scores.

We're going to make use of [Window Functions](https://www.postgresql.org/docs/current/tutorial-window.html) to look ahead for bonus pins and look behind for spares.

```sql

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

;

```

**Results**:

|game|frame|roll|pins|next_pins|next_next_pins|is_strike|is_spare|

|----|-----|----|----|---------|--------------|---------|--------|

| 4| 1| 1| 7| 2| 10|false |false |

| 4| 1| 2| 2| 10| 5|false |false |

| 4| 2| 1| 10| 5| 4|true |false |

| 4| 3| 1| 5| 4| 8|false |false |

| 4| 3| 2| 4| 8| 1|false |false |

| 4| 4| 1| 8| 1| 10|false |false |

| 4| 4| 2| 1| 10| 6|false |false |

| 4| 5| 1| 10| 6| 3|true |false |

| 4| 6| 1| 6| 3| 9|false |false |

| 4| 6| 2| 3| 9| 0|false |false |

| 4| 7| 1| 9| 0| 10|false |false |

| 4| 7| 2| 0| 10| 7|false |false |

| 4| 8| 1| 10| 7| 2|true |false |

| 4| 9| 1| 7| 2| 10|false |false |

| 4| 9| 2| 2| 10| 10|false |false |

| 4| 10| 1| 10| 10| 8|true |false |

| 4| 10| 2| 10| 8| 0|false |false |

| 4| 10| 3| 8| 0| 0|false |false |

That looks right. The future pins are correct and the strikes and spares are correctly identified.

If we wanted, we could make a view, but we're going to continue with the query by using [Common Table Expressions

(CTE)](https://www.postgresql.org/docs/current/queries-with.html).

We'll call the first CTE `stats` and build on it with the next CTE to calculate the bonus based on the rules of

strikes and spares. Strikes get the next two rolls and spares get the next roll.

There is a special condition for the last frame. We accept the pins as final since there are no future frames to

look at. We don't have to 'wait' to score the last frame. We can score it immediately.

> I have to admit, this was the hardest part to figure out and I was stuck on it for longer than I'd like to admit.

> The perfect game scenario was totalling 320 for the longest time! (Perfect games of 12 strikes in a row are 300 points, not 320)

```sql

WITH stats AS (

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare,

frame = (max(frame) OVER (PARTITION BY game)) AS is_last_frame

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

)

SELECT

*,

CASE

WHEN is_last_frame THEN pins

WHEN is_strike THEN pins + next_pins + next_next_pins

WHEN is_spare THEN pins + next_pins

ELSE

pins

END

AS score

FROM stats

;

```

**Results with pins per frame**:

|game|frame|roll|pins|next_pins|next_next_pins|is_strike|is_spare|is_last_frame|score|

|----|-----|----|----|---------|--------------|---------|--------|-------------|-----|

| 4| 1| 1| 7| 2| 10|false |false |false | 7|

| 4| 1| 2| 2| 10| 5|false |false |false | 2|

| 4| 2| 1| 10| 5| 4|true |false |false | 19|

| 4| 3| 1| 5| 4| 8|false |false |false | 5|

| 4| 3| 2| 4| 8| 1|false |false |false | 4|

| 4| 4| 1| 8| 1| 10|false |false |false | 8|

| 4| 4| 2| 1| 10| 6|false |false |false | 1|

| 4| 5| 1| 10| 6| 3|true |false |false | 19|

| 4| 6| 1| 6| 3| 9|false |false |false | 6|

| 4| 6| 2| 3| 9| 0|false |false |false | 3|

| 4| 7| 1| 9| 0| 10|false |false |false | 9|

| 4| 7| 2| 0| 10| 7|false |false |false | 0|

| 4| 8| 1| 10| 7| 2|true |false |false | 19|

| 4| 9| 1| 7| 2| 10|false |false |false | 7|

| 4| 9| 2| 2| 10| 10|false |false |false | 2|

| 4| 10| 1| 10| 10| 8|true |false |true | 10|

| 4| 10| 2| 10| 8| 0|false |false |true | 10|

| 4| 10| 3| 8| 0| 0|false |false |true | 8|

That looks good. The scores are correct and the last frame is scored correctly.

We could simply `SUM` everything at this point:

```sql

WITH stats AS (

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare,

frame = (max(frame) OVER (PARTITION BY game)) AS is_last_frame

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

),

scores AS (

SELECT

*,

CASE

WHEN is_last_frame THEN pins

WHEN is_strike THEN pins + next_pins + next_next_pins

WHEN is_spare THEN pins + next_pins

ELSE

pins

END

AS score

FROM stats

)

SELECT

game,

SUM(score)

FROM scores

GROUP BY game

;

```

**Results final `SUM`**:

|game|sum|

|----|---|

| 1|300|

| 2| 10|

| 3|100|

| 4|139|

Notice the use of CTEs again so we can build on the previous step. This is an important pattern that I follow

strictly when building up queries. It allows me to extract parts into views in the future and provides a good

place for meaningful names.

In Bowling, we normally to see a running score frame by frame. We can do this by adding a cumulative score to each frame with yet another Window Function.

```sql

WITH stats AS (

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare,

frame = (max(frame) OVER (PARTITION BY game)) AS is_last_frame

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

),

scores AS (

SELECT

*,

CASE

WHEN is_last_frame THEN pins

WHEN is_strike THEN pins + next_pins + next_next_pins

WHEN is_spare THEN pins + next_pins

ELSE

pins

END

AS score

FROM stats

),

sums AS (

-- Note: This CTE will get optimized away by the query planner.

-- Nothing is calling it, and we can choose which to call in the final query.

SELECT

game,

SUM(score)

FROM scores

GROUP BY game

),

cumulative_scores AS (

SELECT

*,

SUM(score) OVER (w) AS cumulative_score,

CASE

WHEN is_strike THEN 'X'

WHEN is_spare THEN ' /'

ELSE REPEAT(' ', roll - 1) || pins

END display

FROM scores

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

)

SELECT

game,

frame,

display,

cumulative_score

FROM cumulative_scores

;

```

**Results Game 1**:

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 1| 1|X | 30|

| 1| 2|X | 60|

| 1| 3|X | 90|

| 1| 4|X | 120|

| 1| 5|X | 150|

| 1| 6|X | 180|

| 1| 7|X | 210|

| 1| 8|X | 240|

| 1| 9|X | 270|

| 1| 10|X | 280|

| 1| 10| 10 | 290|

| 1| 10| 10 | 300|

**Results Game 2**:

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 2| 1|1 | 1|

| 2| 1| 0 | 1|

| 2| 2|1 | 2|

| 2| 2| 0 | 2|

| 2| 3|1 | 3|

| 2| 3| 0 | 3|

| 2| 4|1 | 4|

| 2| 4| 0 | 4|

| 2| 5|1 | 5|

| 2| 5| 0 | 5|

| 2| 6|1 | 6|

| 2| 6| 0 | 6|

| 2| 7|1 | 7|

| 2| 7| 0 | 7|

| 2| 8|1 | 8|

| 2| 8| 0 | 8|

| 2| 9|1 | 9|

| 2| 9| 0 | 9|

| 2| 10|1 | 10|

| 2| 10| 0 | 10|

**Results Game 3**:

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 3| 1|0 | 0|

| 3| 1| / | 10|

| 3| 2|0 | 10|

| 3| 2| / | 20|

| 3| 3|0 | 20|

| 3| 3| / | 30|

| 3| 4|0 | 30|

| 3| 4| / | 40|

| 3| 5|0 | 40|

| 3| 5| / | 50|

| 3| 6|0 | 50|

| 3| 6| / | 60|

| 3| 7|0 | 60|

| 3| 7| / | 70|

| 3| 8|0 | 70|

| 3| 8| / | 80|

| 3| 9|0 | 80|

| 3| 9| / | 90|

| 3| 10|0 | 90|

| 3| 10| / | 100|

**Results Game 4**:

> I'm putting this in a preformatted text block so you can see the formatting. Markdown tables aren't doing

> this justice.

```

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 4| 1|7 | 7|

| 4| 1| 2 | 9|

| 4| 2|X | 28|

| 4| 3|5 | 33|

| 4| 3| 4 | 37|

| 4| 4|8 | 45|

| 4| 4| 1 | 46|

| 4| 5|X | 65|

| 4| 6|6 | 71|

| 4| 6| 3 | 74|

| 4| 7|9 | 83|

| 4| 7| 0 | 83|

| 4| 8|X | 102|

| 4| 9|7 | 109|

| 4| 9| 2 | 111|

| 4| 10|X | 121|

| 4| 10| 10 | 131|

| 4| 10| 8 | 139|

```

I narrowed the columns a little to make it easier to follow. In the final query, I would include all the columns

to let the client decide how to display it.

## Conclusion

If you wanted to use this using a Business Intelligence (BI) tool, you should create a view for each CTE and then query the final view.

Should bowling be calculated in SQL? I'll leave that up to you. It was a fun exercise to figure out the logic and I hope you enjoyed the ride!

---

# Karaoke

Date: 2024-09-24

URL: https://ddrscott.github.io/blog/2024/tts-comparision/

Tags: life

Description: What it's like when robots campaign for your Site Reliability position

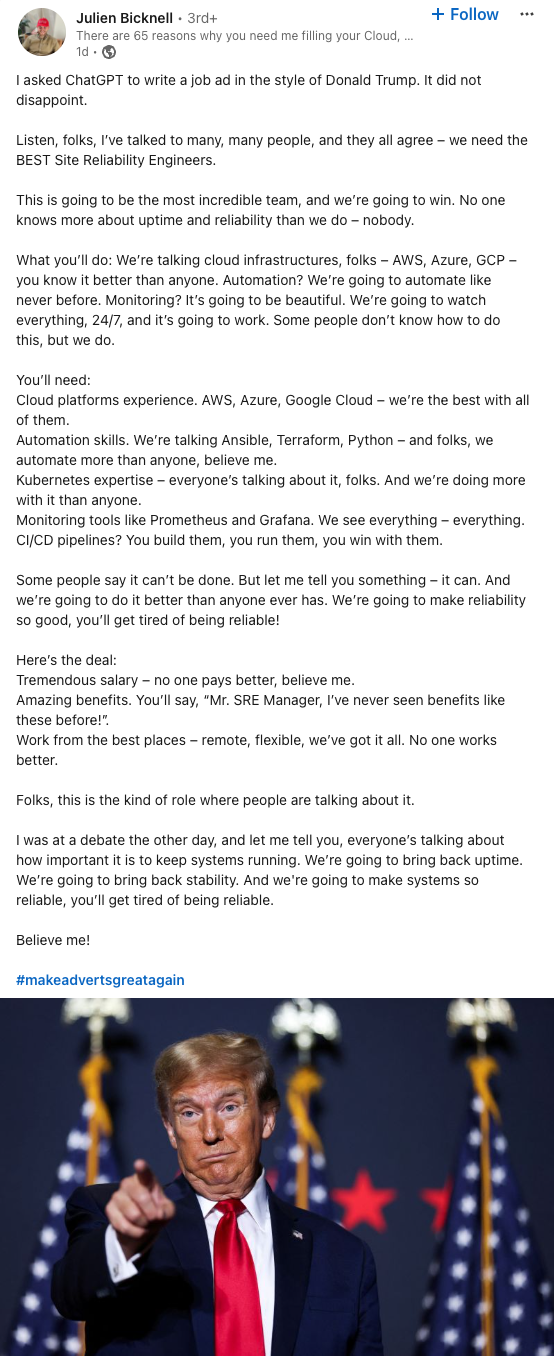

I've been doing a lot of comparisons between Text to Speech (TTS) engines recently and run into a this post

from [Julien Bicknell](https://www.linkedin.com/in/juliensydney) who had ChatGPT "write a job ad in the style of Donald

Trump". I couldn't resist passing the generated script through to the Text to Speech engines in front of me.

**WARNING**: This is NOT a comprehensive analysis.

I'm simply posting these recordings so I don't forget about them, and can listen to them in the future for a laugh. If someone wants a thorough analysis make me an offer. In the meantime, enjoy the robots trying to get an SRE job.

## Audio Outputs from Various Vendors

The command, `golumn /path/to/file.csv`, will open a GUI Window showing data in 0.1 seconds with a simple grid of data scrollable till my fingers get tired.

Overtime, I've added keyboard shortcuts to quick filter/unfilter data based on the current selection, sort columns, and search and filter any column for a piece of text, but at it's core, it's a sharp tool that does 1 thing well: **View CSV files**

## Open Source and Legacy

[Golumn](https://github.com/ddrscott/golumn) is open source. One day, someone other than myself can contribute to it. Someone other than myself could have the same impatience for CSV load times. Someone could have similar hotkey preferences. Or maybe not. I don't put it out there for fame or fortune. I but it out there because it's my favorite pet project and everyone should have a change to play with it. `Golumn` is buddy that has served me well for 7+ years, and I want it to have a home beyond me. History is made by sharing, not hoarding.

## Conclusion

Thanks for getting this far. I hope you find Golumn useful, and reach out to me if you have any questions or

suggestions. I'm always looking for ways to make it better.

---

# Bowling Scores the SQL Way

Date: 2024-10-09

URL: https://ddrscott.github.io/blog/2024/sql-bowling/

Tags: sql

Description: How to calculate bowling scores using SQL. No Store Procedures allowed!

The scoring rules for American Bowling are simple on paper, but can be a chore to calculate by hand. This is a fun exercise to calculate the scores in mass using SQL. Because if you can't do it in SQL, you're life is too easy!

If you don't know the rules, here's a quick summary: https://www.kidslearntobowl.com/how-to-keep-score/

## Data Preparation

Making a table to store the raw data for the individual games.

We're not going into full data modelling by adding players and timestamps. We're keeping it simple for this example.

```sql

DROP TABLE IF EXISTS game_rolls;

CREATE TABLE game_rolls (

game int,

frame int,

roll int,

pins int

);

```

### Add a Perfect Game

A perfect game is 12 strikes in a row. 10 strikes in the first 9 frames and 2 strikes in the 10th frame.

```sql

-- Perfect Game

-- Total: 300

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(1, 1, 1, 10),

(1, 2, 1, 10),

(1, 3, 1, 10),

(1, 4, 1, 10),

(1, 5, 1, 10),

(1, 6, 1, 10),

(1, 7, 1, 10),

(1, 8, 1, 10),

(1, 9, 1, 10),

(1, 10, 1, 10),

(1, 10, 2, 10),

(1, 10, 3, 10);

```

### Add an Unlucky Second Game

We managed to knock down 1 pin each frame and missed the second roll. Repeat this for all 10 frames.

```sql

-- 1 pin each frame.

-- Total: 10

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(2, 1, 1, 1),

(2, 1, 2, 0),

(2, 2, 1, 1),

(2, 2, 2, 0),

(2, 3, 1, 1),

(2, 3, 2, 0),

(2, 4, 1, 1),

(2, 4, 2, 0),

(2, 5, 1, 1),

(2, 5, 2, 0),

(2, 6, 1, 1),

(2, 6, 2, 0),

(2, 7, 1, 1),

(2, 7, 2, 0),

(2, 8, 1, 1),

(2, 8, 2, 0),

(2, 9, 1, 1),

(2, 9, 2, 0),

(2, 10, 1, 1),

(2, 10, 2, 0);

```

### Add Gutter Ball and Spare

Now, we're showing off our skills. Throw the first ball into the gutter on purpose and take down the rest in the second roll for a spare.

```sql

-- 0 then 10 each frame

-- Total: 100

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(3, 1, 1, 0),

(3, 1, 2, 10),

(3, 2, 1, 0),

(3, 2, 2, 10),

(3, 3, 1, 0),

(3, 3, 2, 10),

(3, 4, 1, 0),

(3, 4, 2, 10),

(3, 5, 1, 0),

(3, 5, 2, 10),

(3, 6, 1, 0),

(3, 6, 2, 10),

(3, 7, 1, 0),

(3, 7, 2, 10),

(3, 8, 1, 0),

(3, 8, 2, 10),

(3, 9, 1, 0),

(3, 9, 2, 10),

(3, 10, 1, 0),

(3, 10, 2, 10);

```

### Add Realistic Game with Strikes and Spares

This is my typical night out, never quite getting halfway to perfection (300). If I spent more time bowling and less time writing SQL maybe I'd get better!

```sql

INSERT INTO game_rolls (game, frame, roll, pins)

VALUES

(4, 1, 1, 7), -- First frame: 7 pins

(4, 1, 2, 2), -- Spare with 2 pins => = 9

(4, 2, 1, 10), -- Second frame: Strike => 9+10+5+4=28

(4, 3, 1, 5), -- Third frame: 5 pins

(4, 3, 2, 4), -- Open frame with 4 pins => 28+9=37

(4, 4, 1, 8), -- Fourth frame: 8 pins

(4, 4, 2, 1), -- Open frame with 1 pin => 37+9=46

(4, 5, 1, 10), -- Fifth frame: Strike => 46+10+6+3=65

(4, 6, 1, 6), -- Sixth frame: 6 pins

(4, 6, 2, 3), -- Open frame with 3 pins => 65+9=74

(4, 7, 1, 9), -- Seventh frame: 9 pins

(4, 7, 2, 0), -- Open frame with 0 pins => 74+9=83

(4, 8, 1, 10), -- Eighth frame: Strike => 83+10+9=102

(4, 9, 1, 7), -- Ninth frame: 7 pins

(4, 9, 2, 2), -- Open frame with 2 pins => 102+9=111

(4, 10, 1, 10), -- Tenth frame: Strike

(4, 10, 2, 10), -- Bonus roll: Strike

(4, 10, 3, 8); -- Bonus roll: 8 pins => 111+10+10+8=139

```

## Query to Calculate Scores

There are many ways to calculate the scores.

We're going to make use of [Window Functions](https://www.postgresql.org/docs/current/tutorial-window.html) to look ahead for bonus pins and look behind for spares.

```sql

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

;

```

**Results**:

|game|frame|roll|pins|next_pins|next_next_pins|is_strike|is_spare|

|----|-----|----|----|---------|--------------|---------|--------|

| 4| 1| 1| 7| 2| 10|false |false |

| 4| 1| 2| 2| 10| 5|false |false |

| 4| 2| 1| 10| 5| 4|true |false |

| 4| 3| 1| 5| 4| 8|false |false |

| 4| 3| 2| 4| 8| 1|false |false |

| 4| 4| 1| 8| 1| 10|false |false |

| 4| 4| 2| 1| 10| 6|false |false |

| 4| 5| 1| 10| 6| 3|true |false |

| 4| 6| 1| 6| 3| 9|false |false |

| 4| 6| 2| 3| 9| 0|false |false |

| 4| 7| 1| 9| 0| 10|false |false |

| 4| 7| 2| 0| 10| 7|false |false |

| 4| 8| 1| 10| 7| 2|true |false |

| 4| 9| 1| 7| 2| 10|false |false |

| 4| 9| 2| 2| 10| 10|false |false |

| 4| 10| 1| 10| 10| 8|true |false |

| 4| 10| 2| 10| 8| 0|false |false |

| 4| 10| 3| 8| 0| 0|false |false |

That looks right. The future pins are correct and the strikes and spares are correctly identified.

If we wanted, we could make a view, but we're going to continue with the query by using [Common Table Expressions

(CTE)](https://www.postgresql.org/docs/current/queries-with.html).

We'll call the first CTE `stats` and build on it with the next CTE to calculate the bonus based on the rules of

strikes and spares. Strikes get the next two rolls and spares get the next roll.

There is a special condition for the last frame. We accept the pins as final since there are no future frames to

look at. We don't have to 'wait' to score the last frame. We can score it immediately.

> I have to admit, this was the hardest part to figure out and I was stuck on it for longer than I'd like to admit.

> The perfect game scenario was totalling 320 for the longest time! (Perfect games of 12 strikes in a row are 300 points, not 320)

```sql

WITH stats AS (

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare,

frame = (max(frame) OVER (PARTITION BY game)) AS is_last_frame

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

)

SELECT

*,

CASE

WHEN is_last_frame THEN pins

WHEN is_strike THEN pins + next_pins + next_next_pins

WHEN is_spare THEN pins + next_pins

ELSE

pins

END

AS score

FROM stats

;

```

**Results with pins per frame**:

|game|frame|roll|pins|next_pins|next_next_pins|is_strike|is_spare|is_last_frame|score|

|----|-----|----|----|---------|--------------|---------|--------|-------------|-----|

| 4| 1| 1| 7| 2| 10|false |false |false | 7|

| 4| 1| 2| 2| 10| 5|false |false |false | 2|

| 4| 2| 1| 10| 5| 4|true |false |false | 19|

| 4| 3| 1| 5| 4| 8|false |false |false | 5|

| 4| 3| 2| 4| 8| 1|false |false |false | 4|

| 4| 4| 1| 8| 1| 10|false |false |false | 8|

| 4| 4| 2| 1| 10| 6|false |false |false | 1|

| 4| 5| 1| 10| 6| 3|true |false |false | 19|

| 4| 6| 1| 6| 3| 9|false |false |false | 6|

| 4| 6| 2| 3| 9| 0|false |false |false | 3|

| 4| 7| 1| 9| 0| 10|false |false |false | 9|

| 4| 7| 2| 0| 10| 7|false |false |false | 0|

| 4| 8| 1| 10| 7| 2|true |false |false | 19|

| 4| 9| 1| 7| 2| 10|false |false |false | 7|

| 4| 9| 2| 2| 10| 10|false |false |false | 2|

| 4| 10| 1| 10| 10| 8|true |false |true | 10|

| 4| 10| 2| 10| 8| 0|false |false |true | 10|

| 4| 10| 3| 8| 0| 0|false |false |true | 8|

That looks good. The scores are correct and the last frame is scored correctly.

We could simply `SUM` everything at this point:

```sql

WITH stats AS (

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare,

frame = (max(frame) OVER (PARTITION BY game)) AS is_last_frame

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

),

scores AS (

SELECT

*,

CASE

WHEN is_last_frame THEN pins

WHEN is_strike THEN pins + next_pins + next_next_pins

WHEN is_spare THEN pins + next_pins

ELSE

pins

END

AS score

FROM stats

)

SELECT

game,

SUM(score)

FROM scores

GROUP BY game

;

```

**Results final `SUM`**:

|game|sum|

|----|---|

| 1|300|

| 2| 10|

| 3|100|

| 4|139|

Notice the use of CTEs again so we can build on the previous step. This is an important pattern that I follow

strictly when building up queries. It allows me to extract parts into views in the future and provides a good

place for meaningful names.

In Bowling, we normally to see a running score frame by frame. We can do this by adding a cumulative score to each frame with yet another Window Function.

```sql

WITH stats AS (

SELECT

*,

COALESCE(LEAD(pins, 1) OVER (w), 0) AS next_pins,

COALESCE(LEAD(pins, 2) OVER (w), 0) AS next_next_pins,

roll = 1 AND pins = 10 AS is_strike,

roll = 2 AND (pins + LAG(pins, 1) OVER (w) = 10) AS is_spare,

frame = (max(frame) OVER (PARTITION BY game)) AS is_last_frame

FROM game_rolls

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

),

scores AS (

SELECT

*,

CASE

WHEN is_last_frame THEN pins

WHEN is_strike THEN pins + next_pins + next_next_pins

WHEN is_spare THEN pins + next_pins

ELSE

pins

END

AS score

FROM stats

),

sums AS (

-- Note: This CTE will get optimized away by the query planner.

-- Nothing is calling it, and we can choose which to call in the final query.

SELECT

game,

SUM(score)

FROM scores

GROUP BY game

),

cumulative_scores AS (

SELECT

*,

SUM(score) OVER (w) AS cumulative_score,

CASE

WHEN is_strike THEN 'X'

WHEN is_spare THEN ' /'

ELSE REPEAT(' ', roll - 1) || pins

END display

FROM scores

WINDOW w AS (PARTITION BY game ORDER BY game, frame, roll)

)

SELECT

game,

frame,

display,

cumulative_score

FROM cumulative_scores

;

```

**Results Game 1**:

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 1| 1|X | 30|

| 1| 2|X | 60|

| 1| 3|X | 90|

| 1| 4|X | 120|

| 1| 5|X | 150|

| 1| 6|X | 180|

| 1| 7|X | 210|

| 1| 8|X | 240|

| 1| 9|X | 270|

| 1| 10|X | 280|

| 1| 10| 10 | 290|

| 1| 10| 10 | 300|

**Results Game 2**:

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 2| 1|1 | 1|

| 2| 1| 0 | 1|

| 2| 2|1 | 2|

| 2| 2| 0 | 2|

| 2| 3|1 | 3|

| 2| 3| 0 | 3|

| 2| 4|1 | 4|

| 2| 4| 0 | 4|

| 2| 5|1 | 5|

| 2| 5| 0 | 5|

| 2| 6|1 | 6|

| 2| 6| 0 | 6|

| 2| 7|1 | 7|

| 2| 7| 0 | 7|

| 2| 8|1 | 8|

| 2| 8| 0 | 8|

| 2| 9|1 | 9|

| 2| 9| 0 | 9|

| 2| 10|1 | 10|

| 2| 10| 0 | 10|

**Results Game 3**:

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 3| 1|0 | 0|

| 3| 1| / | 10|

| 3| 2|0 | 10|

| 3| 2| / | 20|

| 3| 3|0 | 20|

| 3| 3| / | 30|

| 3| 4|0 | 30|

| 3| 4| / | 40|

| 3| 5|0 | 40|

| 3| 5| / | 50|

| 3| 6|0 | 50|

| 3| 6| / | 60|

| 3| 7|0 | 60|

| 3| 7| / | 70|

| 3| 8|0 | 70|

| 3| 8| / | 80|

| 3| 9|0 | 80|

| 3| 9| / | 90|

| 3| 10|0 | 90|

| 3| 10| / | 100|

**Results Game 4**:

> I'm putting this in a preformatted text block so you can see the formatting. Markdown tables aren't doing

> this justice.

```

|game|frame|display|cumulative_score|

|----|-----|-------|----------------|

| 4| 1|7 | 7|

| 4| 1| 2 | 9|

| 4| 2|X | 28|

| 4| 3|5 | 33|

| 4| 3| 4 | 37|

| 4| 4|8 | 45|

| 4| 4| 1 | 46|

| 4| 5|X | 65|

| 4| 6|6 | 71|

| 4| 6| 3 | 74|

| 4| 7|9 | 83|

| 4| 7| 0 | 83|

| 4| 8|X | 102|

| 4| 9|7 | 109|

| 4| 9| 2 | 111|

| 4| 10|X | 121|

| 4| 10| 10 | 131|

| 4| 10| 8 | 139|

```

I narrowed the columns a little to make it easier to follow. In the final query, I would include all the columns

to let the client decide how to display it.

## Conclusion

If you wanted to use this using a Business Intelligence (BI) tool, you should create a view for each CTE and then query the final view.

Should bowling be calculated in SQL? I'll leave that up to you. It was a fun exercise to figure out the logic and I hope you enjoyed the ride!

---

# Karaoke

Date: 2024-09-24

URL: https://ddrscott.github.io/blog/2024/tts-comparision/

Tags: life

Description: What it's like when robots campaign for your Site Reliability position

I've been doing a lot of comparisons between Text to Speech (TTS) engines recently and run into a this post

from [Julien Bicknell](https://www.linkedin.com/in/juliensydney) who had ChatGPT "write a job ad in the style of Donald

Trump". I couldn't resist passing the generated script through to the Text to Speech engines in front of me.

**WARNING**: This is NOT a comprehensive analysis.

I'm simply posting these recordings so I don't forget about them, and can listen to them in the future for a laugh. If someone wants a thorough analysis make me an offer. In the meantime, enjoy the robots trying to get an SRE job.

## Audio Outputs from Various Vendors

| Open AI - Nova | |

| Google Vertex AI - Casual K | |

| Google Vertex AI - Journey-O | |

| Play.HT - Play v3 | |

| Microsoft Copilot |

> Listen, folks, I’ve talked to many, many people, and they all agree – we need the BEST Site Reliability Engineers.

>

> This is going to be the most incredible team, and we’re going to win. No one knows more about uptime and reliability than we do – nobody.

>

> What you’ll do: We’re talking cloud infrastructures, folks – AWS, Azure, GCP – you know it better than anyone. Automation? We’re going to automate like never before. Monitoring? It’s going to be beautiful. We’re going to watch everything, 24/7, and it’s going to work. Some people don’t know how to do this, but we do.

>

> You’ll need:

> Cloud platforms experience. AWS, Azure, Google Cloud – we’re the best with all of them.

> Automation skills. We’re talking Ansible, Terraform, Python – and folks, we automate more than anyone, believe me.

> Kubernetes expertise – everyone’s talking about it, folks. And we’re doing more with it than anyone.

> Monitoring tools like Prometheus and Grafana. We see everything – everything.

> CI/CD pipelines? You build them, you run them, you win with them.

>

> Some people say it can’t be done. But let me tell you something – it can. And we’re going to do it better than anyone ever has. We’re going to make reliability so good, you’ll get tired of being reliable!

>

> Here’s the deal:

> Tremendous salary – no one pays better, believe me.

> Amazing benefits. You’ll say, “Mr. SRE Manager, I’ve never seen benefits like these before!”.

> Work from the best places – remote, flexible, we’ve got it all. No one works better.

>

> Folks, this is the kind of role where people are talking about it.

>

> I was at a debate the other day, and let me tell you, everyone’s talking about how important it is to keep systems running. We’re going to bring back uptime. We’re going to bring back stability. And we're going to make systems so reliable, you’ll get tired of being reliable.

>

> Believe me!

>

---

# Karaoke

Date: 2024-09-23

URL: https://ddrscott.github.io/blog/2024/karaoke-podcast/

Tags: life

Description: Surprisingly Good AI Generated Podcast Summary of Karaoke Artcle

This podcast was created using [Google's Notebook LM](https://notebooklm.google.com)

I feed in my Karaoke Article and this was the results.

[Audio File](https://cdn.dataturd.com/audio/karaoke-article.mp3)

## Feedback

### Pros

- The speakers are lively and engaging.

- Interesting conclusions

- Good story telling

- Unique perspective on the original article, which provides some feedback to me, the author, for how my readers could interpret the post.

### Cons

- Misinterpreted the complications I had with the "middle" channel

- Wrong conclusion about the contest. I did share he got a silver medal.

## Text Transcription (provided by [AssemblyAI](https://www.assemblyai.com))

**Alex**: Ever been listening to music and think, man, I wish I could take the lead singer out of this so I could sing it at karaoke night.

**Pat**: Happens all the time.

**Alex**: Well, that's what we're diving into today. We found this blog post by a self proclaimed, get this, recovering ten x developer, okay. Who used his coding powers to, well, take karaoke night to a whole new level.

**Pat**: Wow.

**Alex**: Helping his son prep for a singing competition using AI. The blog post is called Karaoke y. Scott. W h y. Catchy.

**Pat**: I like it.

**Alex**: So Scott son has this competition. They need to isolate vocals from these pre recorded tracks.

**Pat**: Okay.

**Alex**: And, I mean, I guess most people would just try to find a karaoke version, right?

**Pat**: Or use some audio software, you know, try to minimize the vocals.

**Alex**: Yeah, just like reduce the vocal track or something.

**Pat**: Exactly.

**Alex**: But not Scott. This guy goes all out.

**Pat**: Oh, I see this coming. I can tell.

**Alex**: He's like, if it's worth doing, it's worth overdoing.

**Pat**: Love it.

**Alex**: Which I thought was hilarious. So he decides to, you know what? I am going to build a whole karaoke system from scratch using AI, of course.

**Pat**: Of course. Why not? Why not?

**Alex**: And it's interesting because it is a good example of why those simpler solutions, they don't always work, especially for something. If you want to be serious about it, if you have a vocalist who actually wants to use this to train.

**Pat**: Exactly. And those traditional methods, they kind of rely on just removing certain frequencies, which for casual singing along, it's fine. But if you really want to hear yourself, it just makes everything muddy. It's not good.

**Alex**: So Scott jumps into this whole world of AI music source separation with this tool called splitter. Have you heard of this?

**Pat**: Yeah. So spleeter uses a neural network, basically, it's trained on tons and tons of songs to learn to identify and separate all the different parts of the music.

**Alex**: Okay.

**Pat**: To vocals, instruments, even drums.

**Alex**: Wow. So we're talking about Scott, who's a coder, not like an audio engineer or anything, right? Diving into collab, notebooks, APIs, static site building, all to just, you know, wow, give his son a shot.

**Pat**: That's the part I think is so fascinating. Right?

**Alex**: Yeah.

**Pat**: Not even just the tech, but that he was willing to just go for it. Like it's such a specific and kind of personal goal.

**Alex**: Totally.

**Pat**: I don't know, it just shows you how accessible these tools are becoming. That anyone can use them.

**Alex**: Yeah. And that you can use them for such, you know, exactly. Weird, specific things.

**Pat**: Totally.

**Alex**: Okay. But like, anything involving AI, it's not.

**Pat**: Always, you know, it's not gonna be easy.

**Alex**: Smooth sailing. And Scott's actually really honest about, like, the challenges he ran into.

**Pat**: Of course. Yeah. I mean, it can be amazing, this AI stuff, but it still needs that human touch.

**Alex**: Yeah.

**Pat**: You know, especially if you want something that sounds really polished.

**Alex**: Right. It can't do everything for you.

**Pat**: Not yet, at least.

**Alex**: Yeah. One of the things that tripped him up was this whole thing, this middle channel.

**Pat**: Oh, yeah.

**Alex**: What is that? And why did it, like, totally derail his whole AI karaoke machine?

**Pat**: Okay, so the middle channel. Imagine listening to a song, right? Like, all the instruments and the vocals, they're kind of laid out in front of you, almost like a 3d map of the sound.

**Alex**: Okay.

**Pat**: And the middle channel is that center part. It's where you'll find a lot of the stuff that blends everything together.

**Alex**: Okay.

**Pat**: It's not just about, like, panning things to the center. It's reverb vocal harmonies, instruments that are kind of meant to glue the whole mix together.

**Alex**: I'm picturing it.

**Pat**: You might not really notice them on their own, but they really add to the overall feel. And that's where these AI tools, like splitters, that's where they can sometimes struggle. Cause it all gets a little intertwined with the main vocals in that middle channel.

**Alex**: So it's like you baked a cake, and now you're trying to take out the sugar.

**Pat**: Yeah, exactly. Good luck with that.

**Alex**: Yep.

**Pat**: So that's what happened with Scott. The AI was doing its thing, doing great, but it really needed a human to come in and fine tune to make sure those vocals were, you know, crystal clear. Especially for a competition.

**Alex**: Yeah, for a competition, you need it to be perfect.

**Pat**: Right.

**Alex**: And he actually says in the blog that the final product was, like, 80% AI generated. 20% human stitched.

**Pat**: Yeah.

**Alex**: Which, I don't know, that just seems like AI these days, you know?

**Pat**: Totally.

**Alex**: It can do a lot, but it still needs us.

**Pat**: Yeah. It's like it can do the heavy lifting, but we still need to, you know, kind of guide it.

**Alex**: It's like when I'm prepping a presentation.

**Pat**: Okay.

**Alex**: Like, I might use AI to help me get my thoughts down.

**Pat**: Yeah.

**Alex**: Maybe even design some slides or whatever. But it's still me, you know? It's my voice.

**Pat**: Exactly.

**Alex**: My perspective that makes it all work together.

**Pat**: It's that human element, not just in the final product, but in the whole process.

**Alex**: Yeah.

**Pat**: Think about Scott. I mean, diving into all that new tech. He wouldn't have learned all that if he just downloaded a karaoke track.

**Alex**: That's a really good point. So, okay, all this tech talk, did it actually work? Did his son nail the audition? Was it a success?

**Pat**: Well, that's the thing. He doesn't actually say if he won or not.

**Alex**: Oh, really?

**Pat**: He kind of leaves us hanging, so.

**Alex**: We don't know if it was a standing. After all that work, he really focuses.

**Pat**: More on the journey, what he learned along the way.

**Alex**: Interesting.

**Pat**: I think it says a lot about how the project was about more than just winning. It was about supporting his kid, doing something cool with technology, maybe learning something new himself.

**Alex**: Yeah, yeah, for sure.

**Pat**: And speaking of which, his big takeaway from all this is 99% practice, 1% technology, which I love, because it's so easy to get caught up in the fancy new thing.

**Alex**: Totally.

**Pat**: But at the end of the day, whether you're singing or coding or, you know, podcasting, it's all about putting in the time, the work.

**Alex**: He even jokes that next time, instead of building a whole karaoke system, he'll just sneak into his son's teacher's suitcase and record practice directly. Well, there you go, hiding in a suitcase. I mean, talk about extreme lengths for your kid's karaoke career.

**Pat**: That's dedication for you. Maybe a little over the top.

**Alex**: He's got a good sense of humor about it, though.

**Pat**: Definitely.

**Alex**: Yeah.

**Pat**: But it's a cool story, right?

**Alex**: Yeah, it really shows how technology can bring families together. You know, like how many dads would build a whole AI karaoke system just for their kid? It's pretty sweet.

**Pat**: Totally. And it's easy to focus on the big, impressive AI stuff, but I think it's these smaller stories that are more interesting.

**Alex**: Yeah.

**Pat**: More personal.

**Alex**: It's not even just about the end result, you know?

**Pat**: No, exactly.

**Alex**: Like all that learning and problem solving, the whole process can be just as important.

**Pat**: I think it goes back to what we were saying about AI and human input, that 80 20 split. AI isn't going to replace us. It's more like it helps us do things we couldn't do before.

**Alex**: And it's like Scott said, 99% practice, 1% technology. You still got to put in a work.

**Pat**: It's a good reminder for all of us.

**Alex**: It is. So I think this deep dive taught us a lot more than how to make a karaoke track. It's about using technology in creative ways.

**Pat**: Definitely, and not being afraid to experiment, even if it seems impossible.

**Alex**: And who knows? Maybe it even inspired some people listening to, I don't know, dust off their old karaoke machines or maybe build their own or build their own. There you go.

**Pat**: That's a wrap.

---

# Karaoke

Date: 2024-09-19

URL: https://ddrscott.github.io/blog/2024/karaoke/

Tags: life

Description: How to leverage technology to win at singing!

TL;DR - Karaoke!

My 10-year-old had a singing competition coming up in 2 weeks. He had two songs to perfect, and one was in Latin. His teacher provided an audio recording of herself performing to use as a reference when she wasn't around. The recording was good enough, but I thought we could do better. Karaoke!

Traditional karaoke machines perform brute force audio filtering by clipping middle frequencies, clipping identical frequencies found in the left and right channel, or removing the center channel, but that's not good enough for my son. I need AI magic!

> If it's worth doing, it's worth overdoing!

> - Me

## Get It Done!

1. **Get a recording with accompaniment of each song.** ✅ (His teacher sent this before the project started)

2. **Find an open-source project that uses AI to segment music files.** [Spleeter](https://github.com/deezer/spleeter) ✅

3. **Create a notebook in Google Colab to take the library out for a spin.** ✅

4. **Glue the separate wav files back together into a single MP3 file.** ✅

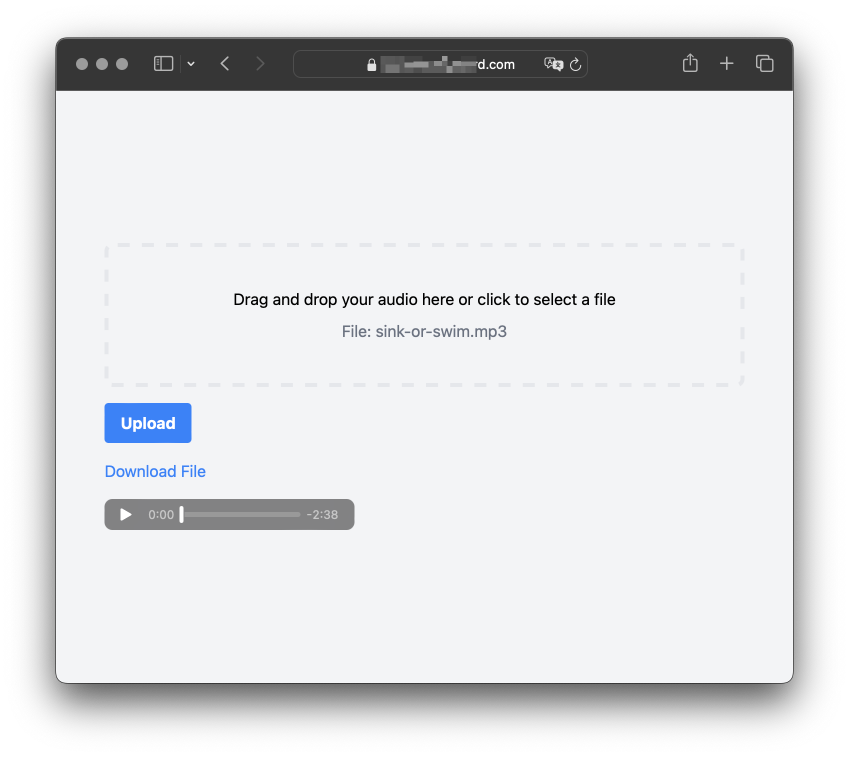

5. **Create an API for the project.** (Screenshot below. I can't publish it since it costs $$$) ✅

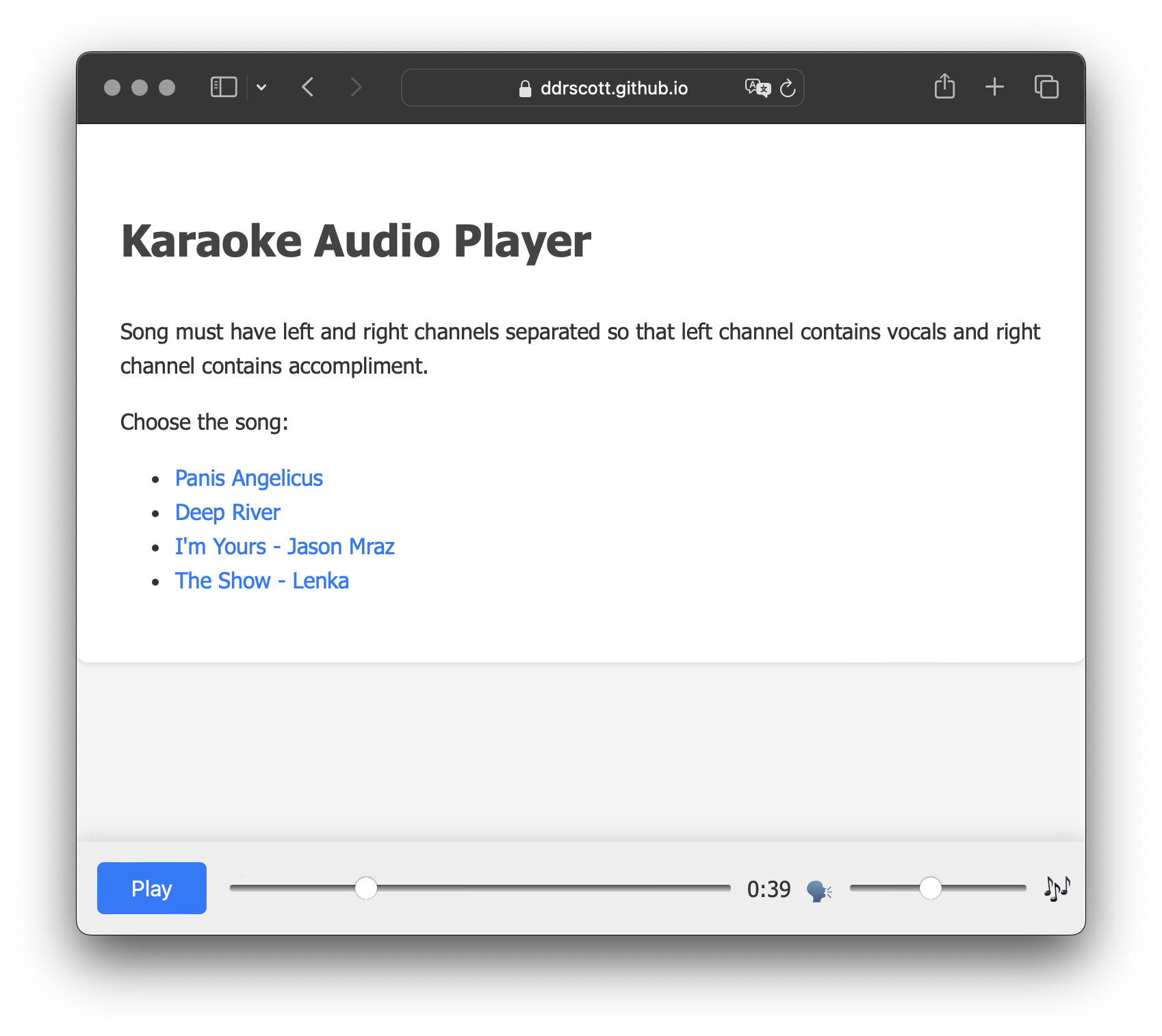

6. **Create a [static site audio player](https://ddrscott.github.io/prompt-games/singing) that allows my son to mix in or out vocals as needed.** ✅

7. **PRACTICE!**

## Lessons Learned

The whole project stemmed from the thought: What can AI do for me? It taught me about the middle channel and filter

technique. It tried its best to implement some Python code using [librosa](https://librosa.org/doc/latest/index.html), but the output was never satisfactory. A simple web search discovered the [Spleeter project](https://github.com/deezer/spleeter), and ChatGPT was fairly good at implementing some snippets I could copy/paste.

I was impressed with its implementation of the JavaScript visualization in the audio player. It used [Flexbox](https://developer.mozilla.org/en-US/docs/Web/CSS/CSS_flexible_box_layout) predictably, but I had to interject to get the player on the bottom as a different component. The audio slider to mix the vocals and accompaniment was not trivial, and AI failed many, many times. I ultimately had to read the JS Audio API to figure out the nuances.

Overall, it was probably 80% AI-generated and 20% human-stitched and corrected. Time-wise, I think it would have been a wash regardless of AI. AI forced me to spend time troubleshooting unfamiliar code vs. traditionally reading unfamiliar docs.

- **Useful:** 5 of 5

- **Productizable:** 3 of 5

- **Bug-Free:** 2 of 5

- **Fun:** 5 of 5

## Screenshots

### Frontend for audio splitter

_I can't provide the URL since it costs $$$ to keep it alive_

> Listen, folks, I’ve talked to many, many people, and they all agree – we need the BEST Site Reliability Engineers.

>

> This is going to be the most incredible team, and we’re going to win. No one knows more about uptime and reliability than we do – nobody.

>

> What you’ll do: We’re talking cloud infrastructures, folks – AWS, Azure, GCP – you know it better than anyone. Automation? We’re going to automate like never before. Monitoring? It’s going to be beautiful. We’re going to watch everything, 24/7, and it’s going to work. Some people don’t know how to do this, but we do.

>

> You’ll need:

> Cloud platforms experience. AWS, Azure, Google Cloud – we’re the best with all of them.

> Automation skills. We’re talking Ansible, Terraform, Python – and folks, we automate more than anyone, believe me.

> Kubernetes expertise – everyone’s talking about it, folks. And we’re doing more with it than anyone.

> Monitoring tools like Prometheus and Grafana. We see everything – everything.

> CI/CD pipelines? You build them, you run them, you win with them.

>

> Some people say it can’t be done. But let me tell you something – it can. And we’re going to do it better than anyone ever has. We’re going to make reliability so good, you’ll get tired of being reliable!

>

> Here’s the deal:

> Tremendous salary – no one pays better, believe me.

> Amazing benefits. You’ll say, “Mr. SRE Manager, I’ve never seen benefits like these before!”.

> Work from the best places – remote, flexible, we’ve got it all. No one works better.

>

> Folks, this is the kind of role where people are talking about it.

>

> I was at a debate the other day, and let me tell you, everyone’s talking about how important it is to keep systems running. We’re going to bring back uptime. We’re going to bring back stability. And we're going to make systems so reliable, you’ll get tired of being reliable.

>

> Believe me!

>

---

# Karaoke

Date: 2024-09-23

URL: https://ddrscott.github.io/blog/2024/karaoke-podcast/

Tags: life

Description: Surprisingly Good AI Generated Podcast Summary of Karaoke Artcle

This podcast was created using [Google's Notebook LM](https://notebooklm.google.com)

I feed in my Karaoke Article and this was the results.

[Audio File](https://cdn.dataturd.com/audio/karaoke-article.mp3)

## Feedback

### Pros

- The speakers are lively and engaging.

- Interesting conclusions

- Good story telling

- Unique perspective on the original article, which provides some feedback to me, the author, for how my readers could interpret the post.

### Cons

- Misinterpreted the complications I had with the "middle" channel

- Wrong conclusion about the contest. I did share he got a silver medal.

## Text Transcription (provided by [AssemblyAI](https://www.assemblyai.com))

**Alex**: Ever been listening to music and think, man, I wish I could take the lead singer out of this so I could sing it at karaoke night.

**Pat**: Happens all the time.

**Alex**: Well, that's what we're diving into today. We found this blog post by a self proclaimed, get this, recovering ten x developer, okay. Who used his coding powers to, well, take karaoke night to a whole new level.

**Pat**: Wow.

**Alex**: Helping his son prep for a singing competition using AI. The blog post is called Karaoke y. Scott. W h y. Catchy.

**Pat**: I like it.

**Alex**: So Scott son has this competition. They need to isolate vocals from these pre recorded tracks.

**Pat**: Okay.

**Alex**: And, I mean, I guess most people would just try to find a karaoke version, right?

**Pat**: Or use some audio software, you know, try to minimize the vocals.

**Alex**: Yeah, just like reduce the vocal track or something.

**Pat**: Exactly.

**Alex**: But not Scott. This guy goes all out.

**Pat**: Oh, I see this coming. I can tell.

**Alex**: He's like, if it's worth doing, it's worth overdoing.

**Pat**: Love it.

**Alex**: Which I thought was hilarious. So he decides to, you know what? I am going to build a whole karaoke system from scratch using AI, of course.

**Pat**: Of course. Why not? Why not?

**Alex**: And it's interesting because it is a good example of why those simpler solutions, they don't always work, especially for something. If you want to be serious about it, if you have a vocalist who actually wants to use this to train.

**Pat**: Exactly. And those traditional methods, they kind of rely on just removing certain frequencies, which for casual singing along, it's fine. But if you really want to hear yourself, it just makes everything muddy. It's not good.

**Alex**: So Scott jumps into this whole world of AI music source separation with this tool called splitter. Have you heard of this?

**Pat**: Yeah. So spleeter uses a neural network, basically, it's trained on tons and tons of songs to learn to identify and separate all the different parts of the music.

**Alex**: Okay.

**Pat**: To vocals, instruments, even drums.

**Alex**: Wow. So we're talking about Scott, who's a coder, not like an audio engineer or anything, right? Diving into collab, notebooks, APIs, static site building, all to just, you know, wow, give his son a shot.

**Pat**: That's the part I think is so fascinating. Right?

**Alex**: Yeah.

**Pat**: Not even just the tech, but that he was willing to just go for it. Like it's such a specific and kind of personal goal.

**Alex**: Totally.

**Pat**: I don't know, it just shows you how accessible these tools are becoming. That anyone can use them.

**Alex**: Yeah. And that you can use them for such, you know, exactly. Weird, specific things.

**Pat**: Totally.

**Alex**: Okay. But like, anything involving AI, it's not.

**Pat**: Always, you know, it's not gonna be easy.

**Alex**: Smooth sailing. And Scott's actually really honest about, like, the challenges he ran into.

**Pat**: Of course. Yeah. I mean, it can be amazing, this AI stuff, but it still needs that human touch.

**Alex**: Yeah.

**Pat**: You know, especially if you want something that sounds really polished.

**Alex**: Right. It can't do everything for you.

**Pat**: Not yet, at least.

**Alex**: Yeah. One of the things that tripped him up was this whole thing, this middle channel.

**Pat**: Oh, yeah.

**Alex**: What is that? And why did it, like, totally derail his whole AI karaoke machine?

**Pat**: Okay, so the middle channel. Imagine listening to a song, right? Like, all the instruments and the vocals, they're kind of laid out in front of you, almost like a 3d map of the sound.

**Alex**: Okay.

**Pat**: And the middle channel is that center part. It's where you'll find a lot of the stuff that blends everything together.

**Alex**: Okay.

**Pat**: It's not just about, like, panning things to the center. It's reverb vocal harmonies, instruments that are kind of meant to glue the whole mix together.

**Alex**: I'm picturing it.

**Pat**: You might not really notice them on their own, but they really add to the overall feel. And that's where these AI tools, like splitters, that's where they can sometimes struggle. Cause it all gets a little intertwined with the main vocals in that middle channel.

**Alex**: So it's like you baked a cake, and now you're trying to take out the sugar.

**Pat**: Yeah, exactly. Good luck with that.

**Alex**: Yep.

**Pat**: So that's what happened with Scott. The AI was doing its thing, doing great, but it really needed a human to come in and fine tune to make sure those vocals were, you know, crystal clear. Especially for a competition.

**Alex**: Yeah, for a competition, you need it to be perfect.

**Pat**: Right.

**Alex**: And he actually says in the blog that the final product was, like, 80% AI generated. 20% human stitched.

**Pat**: Yeah.

**Alex**: Which, I don't know, that just seems like AI these days, you know?

**Pat**: Totally.

**Alex**: It can do a lot, but it still needs us.

**Pat**: Yeah. It's like it can do the heavy lifting, but we still need to, you know, kind of guide it.

**Alex**: It's like when I'm prepping a presentation.

**Pat**: Okay.

**Alex**: Like, I might use AI to help me get my thoughts down.

**Pat**: Yeah.

**Alex**: Maybe even design some slides or whatever. But it's still me, you know? It's my voice.

**Pat**: Exactly.

**Alex**: My perspective that makes it all work together.

**Pat**: It's that human element, not just in the final product, but in the whole process.

**Alex**: Yeah.

**Pat**: Think about Scott. I mean, diving into all that new tech. He wouldn't have learned all that if he just downloaded a karaoke track.

**Alex**: That's a really good point. So, okay, all this tech talk, did it actually work? Did his son nail the audition? Was it a success?

**Pat**: Well, that's the thing. He doesn't actually say if he won or not.

**Alex**: Oh, really?

**Pat**: He kind of leaves us hanging, so.

**Alex**: We don't know if it was a standing. After all that work, he really focuses.

**Pat**: More on the journey, what he learned along the way.

**Alex**: Interesting.

**Pat**: I think it says a lot about how the project was about more than just winning. It was about supporting his kid, doing something cool with technology, maybe learning something new himself.

**Alex**: Yeah, yeah, for sure.

**Pat**: And speaking of which, his big takeaway from all this is 99% practice, 1% technology, which I love, because it's so easy to get caught up in the fancy new thing.

**Alex**: Totally.

**Pat**: But at the end of the day, whether you're singing or coding or, you know, podcasting, it's all about putting in the time, the work.

**Alex**: He even jokes that next time, instead of building a whole karaoke system, he'll just sneak into his son's teacher's suitcase and record practice directly. Well, there you go, hiding in a suitcase. I mean, talk about extreme lengths for your kid's karaoke career.

**Pat**: That's dedication for you. Maybe a little over the top.

**Alex**: He's got a good sense of humor about it, though.

**Pat**: Definitely.

**Alex**: Yeah.

**Pat**: But it's a cool story, right?

**Alex**: Yeah, it really shows how technology can bring families together. You know, like how many dads would build a whole AI karaoke system just for their kid? It's pretty sweet.

**Pat**: Totally. And it's easy to focus on the big, impressive AI stuff, but I think it's these smaller stories that are more interesting.

**Alex**: Yeah.

**Pat**: More personal.

**Alex**: It's not even just about the end result, you know?

**Pat**: No, exactly.

**Alex**: Like all that learning and problem solving, the whole process can be just as important.

**Pat**: I think it goes back to what we were saying about AI and human input, that 80 20 split. AI isn't going to replace us. It's more like it helps us do things we couldn't do before.

**Alex**: And it's like Scott said, 99% practice, 1% technology. You still got to put in a work.

**Pat**: It's a good reminder for all of us.

**Alex**: It is. So I think this deep dive taught us a lot more than how to make a karaoke track. It's about using technology in creative ways.

**Pat**: Definitely, and not being afraid to experiment, even if it seems impossible.

**Alex**: And who knows? Maybe it even inspired some people listening to, I don't know, dust off their old karaoke machines or maybe build their own or build their own. There you go.

**Pat**: That's a wrap.

---

# Karaoke

Date: 2024-09-19

URL: https://ddrscott.github.io/blog/2024/karaoke/

Tags: life

Description: How to leverage technology to win at singing!

TL;DR - Karaoke!

My 10-year-old had a singing competition coming up in 2 weeks. He had two songs to perfect, and one was in Latin. His teacher provided an audio recording of herself performing to use as a reference when she wasn't around. The recording was good enough, but I thought we could do better. Karaoke!

Traditional karaoke machines perform brute force audio filtering by clipping middle frequencies, clipping identical frequencies found in the left and right channel, or removing the center channel, but that's not good enough for my son. I need AI magic!

> If it's worth doing, it's worth overdoing!

> - Me

## Get It Done!

1. **Get a recording with accompaniment of each song.** ✅ (His teacher sent this before the project started)

2. **Find an open-source project that uses AI to segment music files.** [Spleeter](https://github.com/deezer/spleeter) ✅

3. **Create a notebook in Google Colab to take the library out for a spin.** ✅

4. **Glue the separate wav files back together into a single MP3 file.** ✅

5. **Create an API for the project.** (Screenshot below. I can't publish it since it costs $$$) ✅

6. **Create a [static site audio player](https://ddrscott.github.io/prompt-games/singing) that allows my son to mix in or out vocals as needed.** ✅

7. **PRACTICE!**

## Lessons Learned

The whole project stemmed from the thought: What can AI do for me? It taught me about the middle channel and filter

technique. It tried its best to implement some Python code using [librosa](https://librosa.org/doc/latest/index.html), but the output was never satisfactory. A simple web search discovered the [Spleeter project](https://github.com/deezer/spleeter), and ChatGPT was fairly good at implementing some snippets I could copy/paste.

I was impressed with its implementation of the JavaScript visualization in the audio player. It used [Flexbox](https://developer.mozilla.org/en-US/docs/Web/CSS/CSS_flexible_box_layout) predictably, but I had to interject to get the player on the bottom as a different component. The audio slider to mix the vocals and accompaniment was not trivial, and AI failed many, many times. I ultimately had to read the JS Audio API to figure out the nuances.

Overall, it was probably 80% AI-generated and 20% human-stitched and corrected. Time-wise, I think it would have been a wash regardless of AI. AI forced me to spend time troubleshooting unfamiliar code vs. traditionally reading unfamiliar docs.

- **Useful:** 5 of 5

- **Productizable:** 3 of 5

- **Bug-Free:** 2 of 5

- **Fun:** 5 of 5

## Screenshots

### Frontend for audio splitter

_I can't provide the URL since it costs $$$ to keep it alive_

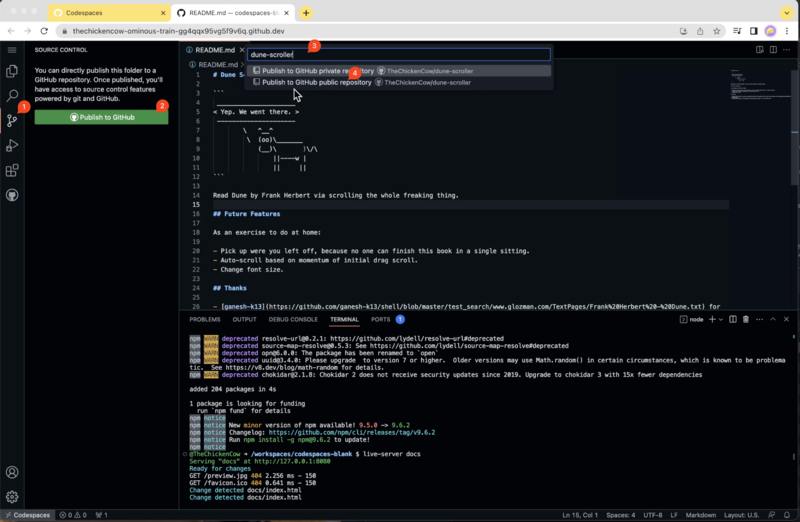

**TL;DR** - The goal is to ~understand how~ make a website. Enter Github and Codespaces!

## Steps

### Create a Github Account

I made one for my friend: TheChickenCow.

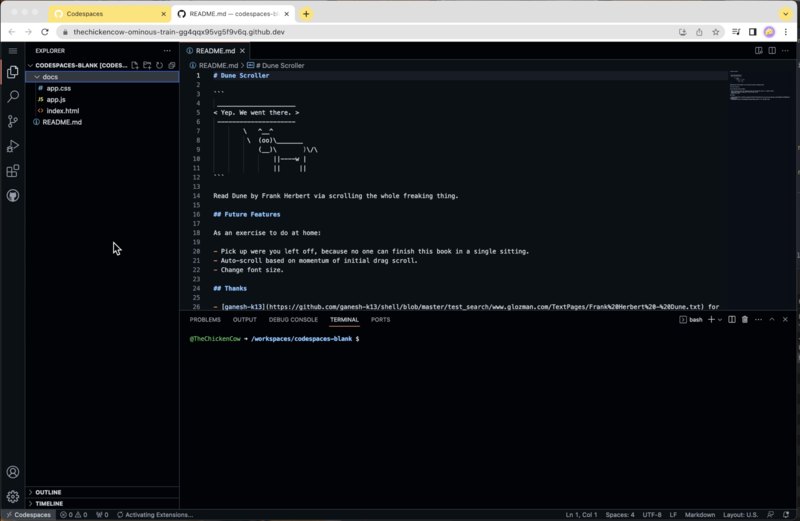

### Start a Codespaces

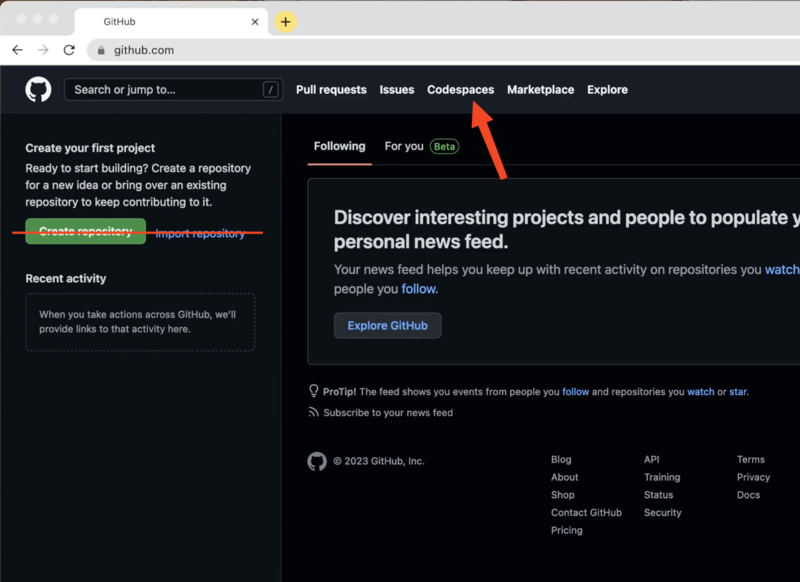

Use `Codespaces` instead of `Create Respository`.

**TL;DR** - The goal is to ~understand how~ make a website. Enter Github and Codespaces!

## Steps

### Create a Github Account

I made one for my friend: TheChickenCow.

### Start a Codespaces

Use `Codespaces` instead of `Create Respository`.

We'll eventually use Codespaces to push to the repository. Otherwise, it'll take more steps to import the repository into Codespaces. Trust us!

### Create the Content for the Website

We'll eventually use Codespaces to push to the repository. Otherwise, it'll take more steps to import the repository into Codespaces. Trust us!

### Create the Content for the Website

#### docs/index.html

This is the primary content of the webpage.

```html

#### docs/index.html

This is the primary content of the webpage.

```html

All files in the project will get automatically selected and hit `OK` to confirm the upload.

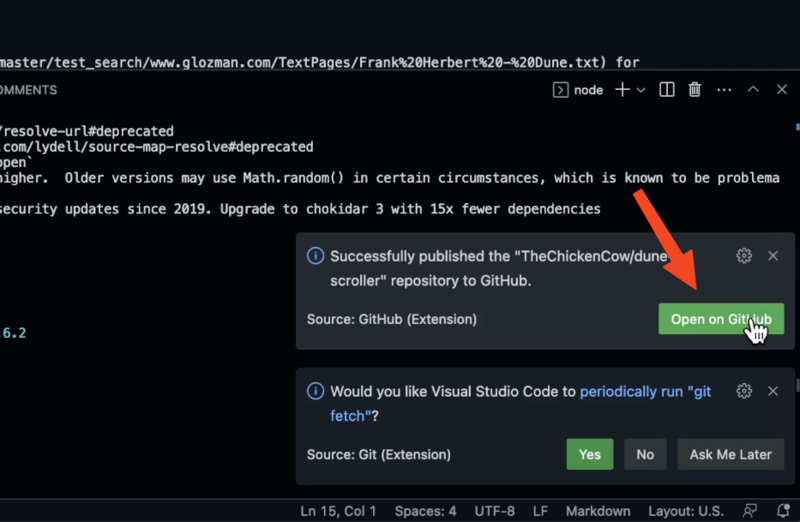

### Open on Github

Notice the notifications at the bottom of Codespaces. It will show the repository has been published to Github.

Select `Open on Github` to see the source code.

All files in the project will get automatically selected and hit `OK` to confirm the upload.

### Open on Github

Notice the notifications at the bottom of Codespaces. It will show the repository has been published to Github.

Select `Open on Github` to see the source code.

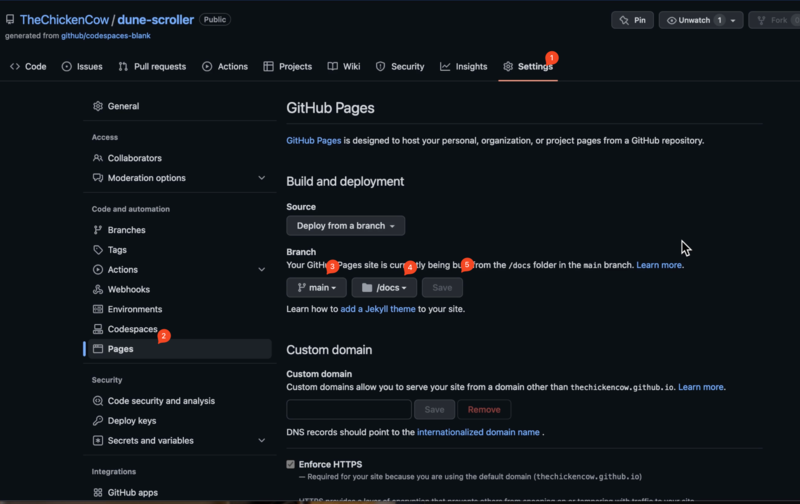

### Publish the Final Site

1. Select the `Settings` tab near the top.

2. Select the `Pages` tab in the left sidebar.

3. In the Branch section, choose `main`

4. Next to it, choose `/docs`

5. Finally, click `Save`

### Publish the Final Site

1. Select the `Settings` tab near the top.

2. Select the `Pages` tab in the left sidebar.

3. In the Branch section, choose `main`

4. Next to it, choose `/docs`

5. Finally, click `Save`

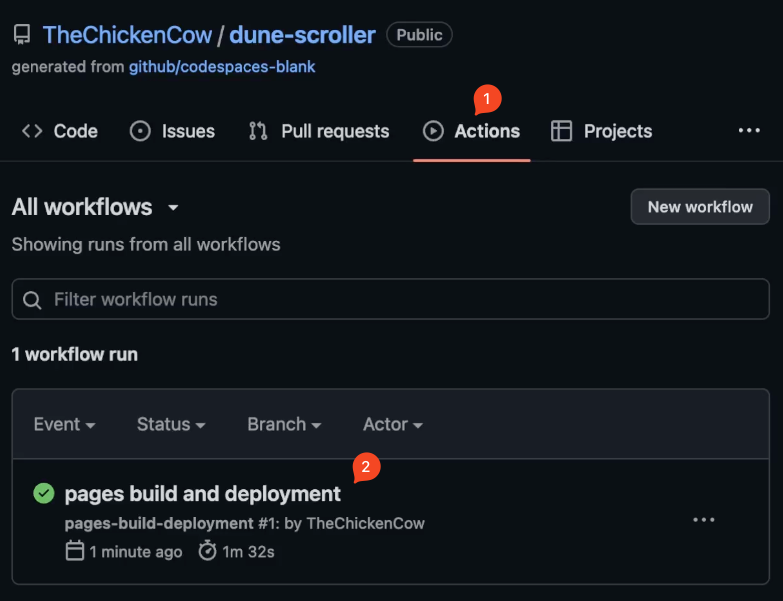

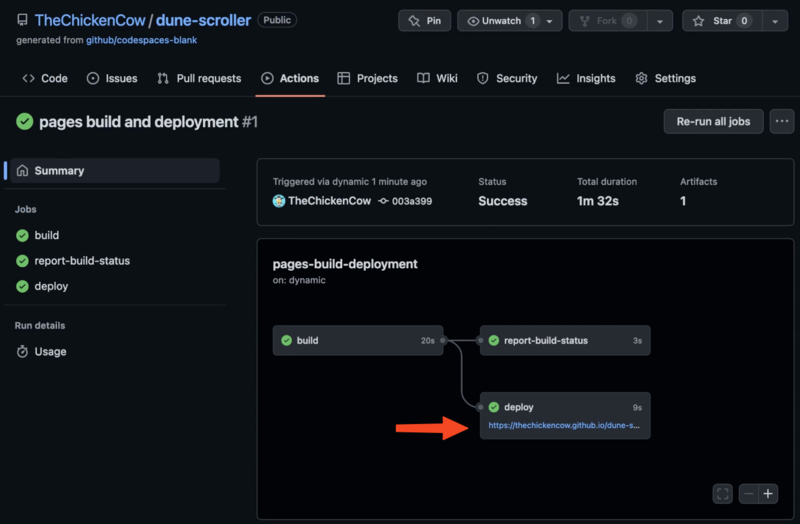

The publish will kick off a deployment Action.

### Wait about 2-minute

The initial deployment of the site take about 2 minutes.

Actions are observable in the top `Actions` tab.

1. Select `Actions` from the tab tab.

2. Select `pages build and deployment`

The publish will kick off a deployment Action.

### Wait about 2-minute

The initial deployment of the site take about 2 minutes.

Actions are observable in the top `Actions` tab.

1. Select `Actions` from the tab tab.

2. Select `pages build and deployment`

### Get the Link

When the deployment is finished, the `deploy` step

will reveal the final website link that you can share with friends

and family or the rest of the Internet.

### Get the Link

When the deployment is finished, the `deploy` step

will reveal the final website link that you can share with friends

and family or the rest of the Internet.

https://ddrscott.github.io/dune-scroller/

## Conclusion

We hope this step by step tutorial helped give you a start on your web development journey. We know this probably gave you more questions than answers, but the goal was to get you started and have a site at the end.

To learn more about Codespaces try their documentation: https://docs.github.com/en/codespaces

### Languages Used

- html

- css

- javascript

Learn about all 3 from https://www.w3schools.com/default.asp

## Connect

Let us know what you're working on or publish or stuck on in the comments below. Happy coding!

---

# Larry Pierce, aka Dad

Date: 2023-02-01

URL: https://ddrscott.github.io/blog/2023/larry-pierce-aka-dad/

Tags: life

Description: Lawrence Roy Pierce age 76, of Lake in the Hills, Illinois passed away on February 1, 2023, at Northwestern Medicine - Huntley Hospital.

```sh

cowsay "Best Dad I ever had. I'll miss you"

# ____________________________________

# < Best Dad I ever had. I'll miss you >

# ------------------------------------

# \ ^__^

# \ (oo)\_______

# (__)\ )\/\

# ||----w |

# || ||

```

## Computers Are Easy, Feelings Are Hard

Today, I received the news my Dad had passed away.

Two days ago he was hospitalized for falling.

https://ddrscott.github.io/dune-scroller/

## Conclusion

We hope this step by step tutorial helped give you a start on your web development journey. We know this probably gave you more questions than answers, but the goal was to get you started and have a site at the end.

To learn more about Codespaces try their documentation: https://docs.github.com/en/codespaces

### Languages Used

- html

- css

- javascript

Learn about all 3 from https://www.w3schools.com/default.asp

## Connect

Let us know what you're working on or publish or stuck on in the comments below. Happy coding!

---

# Larry Pierce, aka Dad

Date: 2023-02-01

URL: https://ddrscott.github.io/blog/2023/larry-pierce-aka-dad/

Tags: life

Description: Lawrence Roy Pierce age 76, of Lake in the Hills, Illinois passed away on February 1, 2023, at Northwestern Medicine - Huntley Hospital.

```sh

cowsay "Best Dad I ever had. I'll miss you"

# ____________________________________

# < Best Dad I ever had. I'll miss you >

# ------------------------------------

# \ ^__^

# \ (oo)\_______

# (__)\ )\/\

# ||----w |

# || ||

```

## Computers Are Easy, Feelings Are Hard

Today, I received the news my Dad had passed away.

Two days ago he was hospitalized for falling. When he retired 10 years ago, he got his first computer and a non-work email address so we could email him pictures from time to time.

He stayed on top of the news and current events through the TV and was just getting use to reading articles on [MSN](https://www.msn.com).

He used a landline as his primary number and had a voicemail machine.

His favorite feature of the landline was how the Caller-ID showed up on the TV before he answered it.

He always knew where the phone was located because it was attached to the wall.

Phones maybe smart, but no phone is wiser than Dad.

## Humor

My Dad loved to laugh, but couldn't tell a joke.

He had a boisterous one-of-a-kind laugh that went higher pitch during the inhale phase. (Note to self: turn his laugh into a ringtone)

He was a literal person that kept things simple.

All my jokes would go over his head. Sometimes he would look up to catch them.

## Adoption

We can't choose our parents, but my Dad chose me. I was 4 years old at the time, and after logistics, I finally came over at 5. He chose my sister, too.

Here we are around 1985.

When he retired 10 years ago, he got his first computer and a non-work email address so we could email him pictures from time to time.

He stayed on top of the news and current events through the TV and was just getting use to reading articles on [MSN](https://www.msn.com).

He used a landline as his primary number and had a voicemail machine.

His favorite feature of the landline was how the Caller-ID showed up on the TV before he answered it.

He always knew where the phone was located because it was attached to the wall.

Phones maybe smart, but no phone is wiser than Dad.

## Humor

My Dad loved to laugh, but couldn't tell a joke.

He had a boisterous one-of-a-kind laugh that went higher pitch during the inhale phase. (Note to self: turn his laugh into a ringtone)

He was a literal person that kept things simple.

All my jokes would go over his head. Sometimes he would look up to catch them.

## Adoption

We can't choose our parents, but my Dad chose me. I was 4 years old at the time, and after logistics, I finally came over at 5. He chose my sister, too.

Here we are around 1985.

He would take us bike riding around [Lake Opeka in Des Plaines, IL](https://web.archive.org/web/20230205141050/https://www.dpparks.org/parks-facilities/lake-park/). We would feed the ducks with stale bread. He'd encourage us to be outside by being outside with us.

## Fiscally Responsible

My Dad loved to save money. He kept the same wardrobe from his 40s and would only replace articles when they were overwarn, never to have more than what could fit into a small closet. I believe his only real source of new clothing were from gifts.

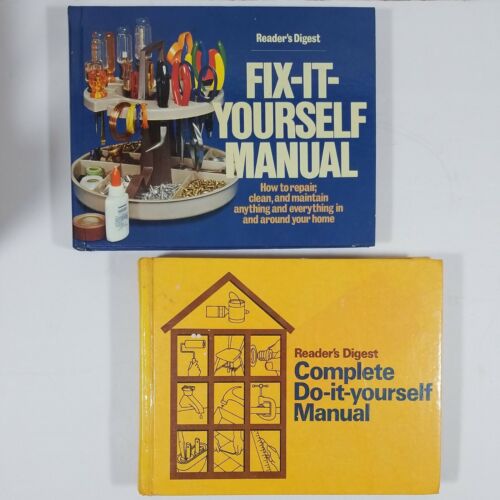

He would try to fix everything himself and with moderate success. Our Internet was "The Readers Digest Fix-It Yourself Manual" and "Complete Do-It Yourself Manual".

He would take us bike riding around [Lake Opeka in Des Plaines, IL](https://web.archive.org/web/20230205141050/https://www.dpparks.org/parks-facilities/lake-park/). We would feed the ducks with stale bread. He'd encourage us to be outside by being outside with us.

## Fiscally Responsible

My Dad loved to save money. He kept the same wardrobe from his 40s and would only replace articles when they were overwarn, never to have more than what could fit into a small closet. I believe his only real source of new clothing were from gifts.

He would try to fix everything himself and with moderate success. Our Internet was "The Readers Digest Fix-It Yourself Manual" and "Complete Do-It Yourself Manual".

Our Youtube was [This Old House hosted by Bob Vila](https://en.wikipedia.org/wiki/This_Old_House).

## Hard Worker

My Dad was a hard worker. He got early and came home late. He went back to school after realizing a B.A. in Political Science was a dead end. We worked at companies until they [closed down](https://en.wikipedia.org/wiki/A._B._Dick_Company) or he [retired](https://www.packaging-by-design.com). Loyal to the end, he even came out of retirement to help train the next generation.

## Retirement

In his retirement, he preferred to stay around town, visit grand kids, make sandwiches, and catch up on TV shows, movies, and the news. A simple man with simple pleasure.

Here we are 30+ years later in Harvard, IL:

Our Youtube was [This Old House hosted by Bob Vila](https://en.wikipedia.org/wiki/This_Old_House).

## Hard Worker

My Dad was a hard worker. He got early and came home late. He went back to school after realizing a B.A. in Political Science was a dead end. We worked at companies until they [closed down](https://en.wikipedia.org/wiki/A._B._Dick_Company) or he [retired](https://www.packaging-by-design.com). Loyal to the end, he even came out of retirement to help train the next generation.

## Retirement

In his retirement, he preferred to stay around town, visit grand kids, make sandwiches, and catch up on TV shows, movies, and the news. A simple man with simple pleasure.

Here we are 30+ years later in Harvard, IL:

And here's the final picture I have with him and Morgan.

And here's the final picture I have with him and Morgan.

## Timeline

* 1946-11-15 - Born

* 2002-12-31 - Baptized in the name of the Father, Son, and Holy Spirit

* 2023-02-01 - Meets Jesus

## Official Obituary

Lawrence Roy "Larry" Pierce age 76, of Lake in the Hills, Illinois passed away on February 1, 2023, at Northwestern Medicine – Huntley Hospital.

Larry was a supportive loving father that enjoyed hikes, bike riding and traveling. He lived a simple life and indulged in simple pleasures like books and movies. Although he couldn't tell a joke, he had a one-of-a-kind laugh.

He was born in Chicago, Illinois on November 15, 1946, the loving son of Archie Roy and Grace (Thoring) Pierce. Larry is survived by his children, Scott (Karen) Pierce, Mark Pierce, and Julie Pierce; his grandchildren, Nathan, Morgan, Jeremy, and Kaili; his brother, Steve (Nancy) Pierce.

## Not The End

This is only one side of my Dad's story and from one narrow perspective.

I'm planning on having a more historical/objective write up soon.

But in the meantime, please comment if Larry has impacted your life in anyway.

Cheers to the best Dad I ever had!

## Timeline

* 1946-11-15 - Born

* 2002-12-31 - Baptized in the name of the Father, Son, and Holy Spirit